Images courtesy of SentiSum

Customer support leaders are often up against the same problem: support tickets arrive in high volumes, usually as unstructured, qualitative text that has to be analyzed before friction points surface. Yet support logs are one of the most important (and generally untapped) sources of customer insight a company is sitting on.

These insights could be driving serious business growth through retention, loyalty, and product improvement. If other teams took these insights seriously, companies could reduce support tickets while simultaneously improving customer experience.

Customer service leaders might say, “But, I know this, it’s just hard to get others to listen. It’s even harder to get them to take action on the insights I send them.”

It’s a cliché, but it’s true: “insight without action is just information.” No one wants to be a source of meaningless information, so how do you become a source of action?

“[Actionable insights] drive the right focus for product direction and digital services. Most importantly, it allows leaders to pivot customer service from being a low-value cost center to a higher value, commercially focused, business-aligned unit.” – Sharad Khandelwal, Support-Driven Growth Ebook

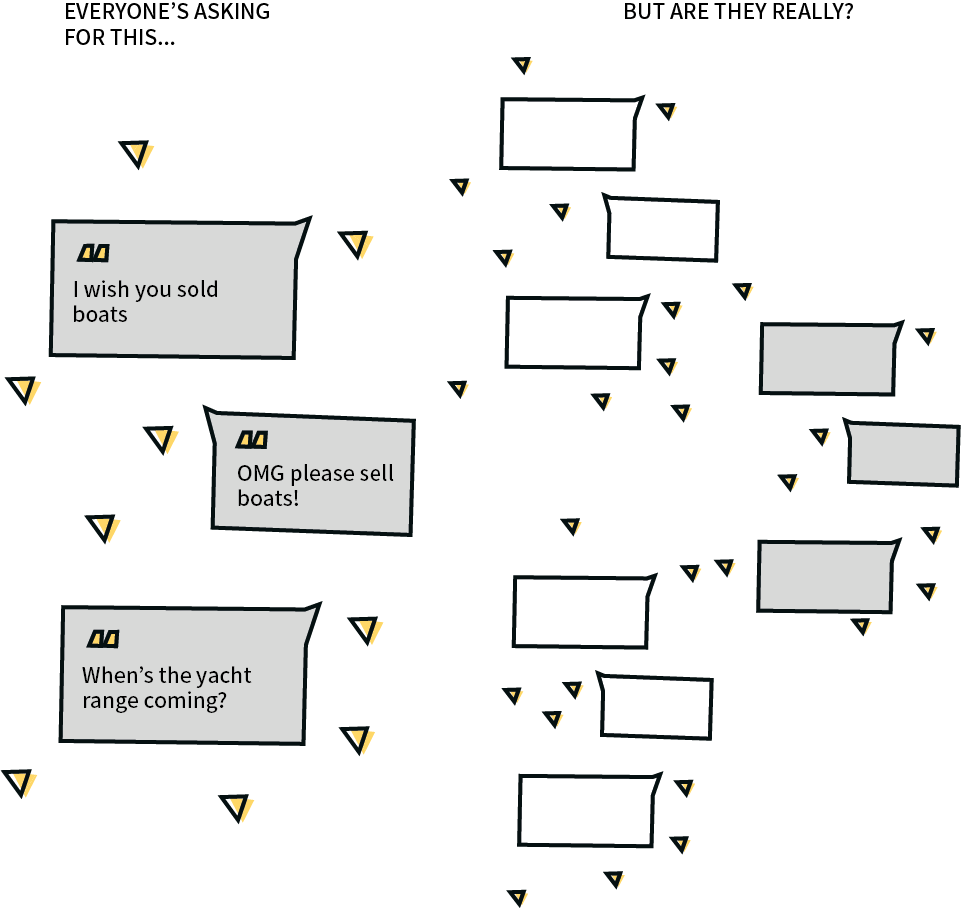

To get others to take action on the insights you provide, you need to deliver insights that are deemed ‘actionable’. Our diagnosis of why ‘insights’ are often ignored or sidelined by other departments is that they are mistrusted or lack context.

Trust and context come down to these two questions:

To answer these questions, here are six tenets of actionable insight. They’ll make sure your customers’ voice is heard and your customer insights drive business improvement.

When providing information about a customer pain point, those taking action on it need to know how important it is.

Some insights cause more customer friction than others. Some lead to instant customer churn, while others can wait. Some affect a lot of customers, others only affect a few.

There are a few ways to contextualize customer insight. One is volume: how many customers mentioned this problem? Another is the sentiment: how angry did this problem make customers feel? And, another is by tying it to outcomes data: did customers who mention this problem spend less or churn faster?

This kind of analytics is no easy feat, but helping others understand how important each friction point is makes them far more likely to take action on it.
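As a rough illustration of the three lenses described above (volume, sentiment, and outcomes), here is a minimal Python sketch. The `Ticket` fields, the sentiment scale, and the sample data are all hypothetical; a real setup would pull these from your help desk and account data.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Ticket:
    topic: str        # hypothetical "reason for contact" tag
    sentiment: float  # hypothetical score from -1.0 (angry) to 1.0 (happy)
    churned: bool     # hypothetical outcome flag joined from account data


def summarize_by_topic(tickets):
    """Return volume, mean sentiment and churn rate for each topic."""
    groups = defaultdict(list)
    for ticket in tickets:
        groups[ticket.topic].append(ticket)

    summary = {}
    for topic, items in groups.items():
        volume = len(items)
        summary[topic] = {
            "volume": volume,
            "mean_sentiment": sum(t.sentiment for t in items) / volume,
            "churn_rate": sum(t.churned for t in items) / volume,
        }
    return summary


# Made-up sample data, for illustration only.
tickets = [
    Ticket("payment failed", -0.8, True),
    Ticket("payment failed", -0.6, True),
    Ticket("payment failed", -0.4, False),
    Ticket("late delivery", -0.2, False),
]

summary = summarize_by_topic(tickets)
# List the highest-volume topics first.
for topic, stats in sorted(summary.items(), key=lambda kv: -kv[1]["volume"]):
    print(topic, stats)
```

Putting the three numbers side by side for each topic lets another team see at a glance which friction point is both common and costly.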

Insightful customer feedback says something new and useful. Simple statistics like a CSAT or Net Promoter Score can be useful for monitoring trends and performance, but alone they don’t give us something new to work with. CSAT, backed with qualitative data (from a free-text field or support chat) that shows what’s driving the score, tells us the way forward. Here’s an example:

The insightful data can be used to make the payment process easier for this group of customers, which in turn increases retention and drives real business value.

The fresher the information the better. Companies will often run voice of the customer programs for a couple of months, then take a couple of months to filter through the qualitative feedback, just to deliver customer insights six months later. The speed at which shopper needs change makes this knowledge generally obsolete—by then the harm to consumer loyalty could be done.

We suggest improving speed to insight by tagging ‘reasons for contact’ in support tickets. The high-volume nature of customer support tickets means there’s an opportunity to respond to trending topics proactively. Using technologies like natural language processing, companies are already running real-time analytics to tag and sort support tickets, giving cross-functional teams fast insights they can use to tackle churn.
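To make the idea of tagging ‘reasons for contact’ and spotting trending topics concrete, here is a deliberately simple Python sketch. It uses keyword matching as a stand-in for a real natural language processing model, and the rules, tag names, threshold, and sample tickets are all assumptions for illustration.

```python
from collections import Counter

# Hypothetical keyword rules standing in for an NLP model.
RULES = {
    "payment issue": ("payment", "card", "paypal"),
    "delivery issue": ("delivery", "late", "courier"),
}


def tag_ticket(text):
    """Return every reason-for-contact tag whose keywords appear in the text."""
    lowered = text.lower()
    tags = [tag for tag, words in RULES.items() if any(w in lowered for w in words)]
    return tags or ["untagged"]


def trending(previous, current, min_ratio=2.0):
    """Return tags whose count in `current` is at least `min_ratio` times `previous`."""
    before = Counter(tag for text in previous for tag in tag_ticket(text))
    now = Counter(tag for text in current for tag in tag_ticket(text))
    # Treat an unseen tag as having one prior ticket to avoid dividing by zero.
    return sorted(tag for tag, n in now.items() if n / max(before[tag], 1) >= min_ratio)


# Made-up tickets for two consecutive periods.
last_week = ["My delivery was late", "Card declined at checkout"]
this_week = [
    "PayPal is not working",
    "Payment failed twice",
    "PayPal error again",
    "Courier never arrived",
]

spikes = trending(last_week, this_week)
print(spikes)
```

Substring matching like this is crude (it will mis-tag plenty of tickets), but the shape is the same with a proper model: tag each ticket as it arrives, then compare counts across periods to see what is rising.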

Granular insights mean getting to the nitty gritty of what went wrong for the customer. Customer feedback surveys are often not actionable without a further root cause analysis; answers are often too high level or generic.

Take this example of an eCommerce retailer. A non-granular insight might look like ‘customer couldn’t check out’, whereas a more actionable, granular insight looks like ‘PayPal isn’t working’. With granular insight like this, product and operations teams can tackle the problem head-on before other customers are affected.

To uncover granular insights, we suggest using multi-level data tagging, for example, “Checkout problem” → “Payment Issue” → “PayPal Not Working”. Hierarchical tagging of customer tickets, feedback and reviews allows any user to start at a high level and then dig deeper into the root cause of the problem.
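One way to picture multi-level tagging is as a path of tags per ticket, with counts rolled up at every level. The Python sketch below assumes each ticket has already been tagged; apart from the “Checkout problem” → “Payment Issue” → “PayPal Not Working” path from the example above, the tag names and counts are hypothetical.

```python
from collections import Counter


def rollup(tag_paths):
    """Count tickets at every level of each tag path."""
    counts = Counter()
    for path in tag_paths:
        for depth in range(1, len(path) + 1):
            counts[path[:depth]] += 1
    return counts


def children(counts, parent=()):
    """Return the tags one level below `parent`, most frequent first."""
    level = {
        path[-1]: n
        for path, n in counts.items()
        if len(path) == len(parent) + 1 and path[: len(parent)] == parent
    }
    return sorted(level.items(), key=lambda kv: (-kv[1], kv[0]))


# Each ticket is a tuple of tags, from broadest to most specific (made-up data).
tickets = [
    ("Checkout problem", "Payment Issue", "PayPal Not Working"),
    ("Checkout problem", "Payment Issue", "PayPal Not Working"),
    ("Checkout problem", "Payment Issue", "Card Declined"),
    ("Checkout problem", "Promo Code Rejected"),
    ("Delivery problem", "Late Delivery"),
]

counts = rollup(tickets)
print(children(counts))                                          # top level
print(children(counts, ("Checkout problem",)))                   # one level down
print(children(counts, ("Checkout problem", "Payment Issue")))   # root cause level
```

Each call to `children` drills one level deeper, which mirrors how a reader would start at “Checkout problem” and work down to the specific cause.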

Live chat logs, interviews, reviews and free-text survey answers give qualitative customer feedback, which is considered to be more granular and insightful. It’s easy to get hung up on quantitative measures like CSAT or NPS, but they’re rarely insightful without an understanding of the descriptive drivers behind them.

Time and time again, however, qualitative feedback lacks robustness. It takes a lot of time to sift through, and usually only a small sample is taken. Some consider manual analysis subjective and the small sample at risk of bias. How can we make large business decisions without trusted, statistically significant evidence?

Survey bias is well-researched. If your surveys encourage a particular answer or are answered by a particular group, that data could be seriously misleading.

Any type of bias that has crept into your customer feedback analysis results should make you wary of reporting them. There are two main buckets of customer survey bias to avoid so that you don’t fall into the trap of basing business decisions on skewed survey results:

Response bias, where there’s something about how the actual survey questionnaire is constructed that encourages a certain type of answer, leading to measurement error. One example is when a questionnaire is so long that your customers answer later questions inaccurately, just to finish the survey quickly and claim their reward.

Selection bias, where the results are skewed a certain way because you’ve only captured feedback from a certain segment of your audience. We see this frequently with customer surveys: when on average just 1% of customers fill out your survey, we must question who that 1% is. If they share similar characteristics, your results are no longer representative.
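A quick way to sanity-check for selection bias is to compare the mix of survey respondents with the mix of your whole customer base. The Python sketch below assumes you can count both by segment; the segment names and numbers are hypothetical, chosen so that respondents are 1% of customers.

```python
def representation_gaps(population, respondents):
    """Return respondent share minus population share for each segment."""
    pop_total = sum(population.values())
    resp_total = sum(respondents.values())
    return {
        segment: respondents.get(segment, 0) / resp_total - count / pop_total
        for segment, count in population.items()
    }


# Hypothetical counts of customers and survey respondents per segment.
population = {"new": 6000, "returning": 3000, "vip": 1000}
respondents = {"new": 20, "returning": 30, "vip": 50}

gaps = representation_gaps(population, respondents)
for segment, gap in gaps.items():
    # A positive gap means the segment is over-represented among respondents.
    print(f"{segment}: {gap:+.0%}")
```

In this made-up data, VIP customers are 10% of the base but half of the respondents, so any score from the survey mostly reflects their view. Large gaps like this are a signal to reweight the results or to be cautious about reporting them.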

Bringing it all together

Following these six rules will improve the quality of the customer feedback you and your team report. We have a real opportunity in the customer insights we deliver. If we deliver them consistently, in an actionable way, the contact center will quickly contribute to business improvement and begin to cement itself as a critical revenue driver.

—

Ben Goodey is marketing lead at SentiSum, a customer analytics platform that brings artificial intelligence to customer support tickets, reviews and surveys. The platform uncovers granular customer insights that drive customer-centric change.